| 81% of pharma firms now deploy some form of AI in R&D | 68% of tech executives cite poor data quality as the primary reason AI initiatives fail | 14% annual increase in AI use across labs (Pistoia Alliance, 2024) |

The marketing is everywhere. Every LIMS and ELN vendor now claims to be ‘AI-powered.’ Conference keynotes promise autonomous laboratories that run experiments overnight without human oversight. Venture capital poured over $8 billion into AI-driven life sciences platforms in 2025 alone. And yet, when you ask laboratory scientists what AI is actually doing in their day-to-day work right now, the answer is usually more modest, more specific, and far more interesting than the headlines suggest.

This article separates signal from noise. Based on current vendor implementations, peer-reviewed research, regulatory guidance published in 2025 and 2026, and industry surveys, here is an honest picture of where AI in laboratory software is genuinely delivering value today — and where the hype is still running ahead of the reality.

The Honest Baseline: Where Labs Actually Stand in 2026

Before discussing what AI can do, it is worth establishing what most laboratories are actually working with. The data is instructive about the gap between ambition and readiness.

According to the Pistoia Alliance’s Lab of the Future 2024 Global Survey, AI use across laboratories increased by 14% year-over-year — a significant adoption signal. But the same survey revealed that nearly 40% of respondents struggle to make their data FAIR (Findable, Accessible, Interoperable, and Reusable), with inconsistent metadata standards cited as the primary barrier to effective AI implementation. Cisco’s 2024 AI Readiness Index found that fewer than one in three organizations believe their current data infrastructure is prepared for AI at all.

The most telling statistic comes from a broader technology survey: 68% of tech executives cite poor data quality and governance as the primary reason AI initiatives fail. In laboratory environments, this is not an abstract concern — it is the central operational challenge. A LIMS or ELN can only deliver AI-driven insights from the data it contains. If that data is inconsistent, incomplete, or poorly structured, the AI layer amplifies the problem rather than solving it.

| The single biggest predictor of AI success in a laboratory is not the sophistication of the AI layer; it is the quality of the data infrastructure underneath it. Labs that have invested in structured data capture, standardized metadata, and validated LIMS workflows consistently outperform those that attempt to layer AI onto fragmented, inconsistent data systems. Before evaluating AI features in any LIMS or ELN, the first question to ask is: is our data ready? |

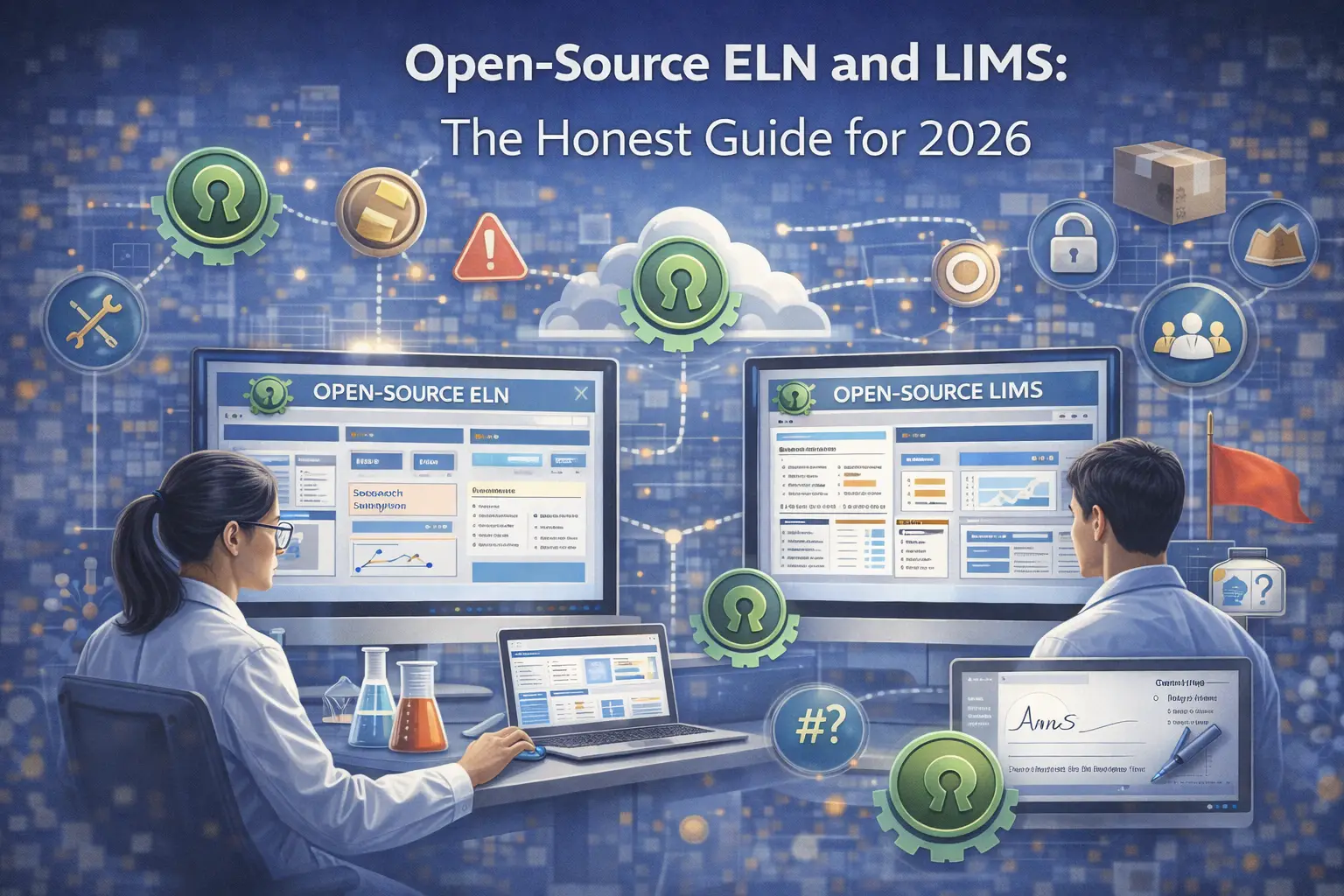

What’s Actually Working: Five AI Applications Delivering Real Value

1. Intelligent Audit Trail Review and Anomaly Detection

In regulated laboratories, audit trail review has historically been a manual, time-consuming periodic process, which makes it exactly the kind of high-volume, pattern-recognition task where machine learning excels. Modern LIMS platforms are beginning to deploy ML models that flag anomalous access patterns, out-of-sequence entries, and statistical outliers in real time, rather than waiting for the next scheduled review cycle.

The practical impact is significant. Traditional audit trail review is conducted monthly or quarterly and is inherently backward-looking — violations are discovered after the fact. AI-assisted review can flag a suspicious login pattern or an improbable sequence of result entries within minutes of it occurring. For regulated environments operating under 21 CFR Part 11 and ALCOA+ requirements, this shift from periodic to continuous monitoring is not just an efficiency gain — it is a meaningful improvement in data integrity posture.

| Platforms that implement this well integrate the anomaly detection directly into the existing audit trail infrastructure — not as a separate dashboard. Look for systems where AI flags are linked to the specific audit trail record and routable to a QMS deviation workflow. |
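To make the idea of record-linked flags concrete, here is a minimal sketch. It uses simple fixed rules as a stand-in for the ML models described above, and every name in it (`AuditEvent`, `flag_events`, the working-hours window, the 30-minute lag threshold) is an illustrative assumption rather than any vendor's API.

```python
from dataclasses import dataclass
from datetime import datetime, time


@dataclass(frozen=True)
class AuditEvent:
    record_id: str         # hypothetical audit trail record identifier
    user: str
    action: str
    event_time: datetime   # when the user says the action happened
    logged_time: datetime  # when the system recorded it


# Assumed site policy; real thresholds would come from the lab's own SOPs.
WORK_START, WORK_END = time(6, 0), time(20, 0)
MAX_LAG_MINUTES = 30


def flag_events(events):
    """Return (record_id, reason) pairs so each flag points at one audit record."""
    flags = []
    latest_by_user = {}
    for ev in sorted(events, key=lambda e: e.logged_time):
        if not (WORK_START <= ev.event_time.time() <= WORK_END):
            flags.append((ev.record_id, "outside working hours"))
        lag_minutes = (ev.logged_time - ev.event_time).total_seconds() / 60
        if lag_minutes > MAX_LAG_MINUTES:
            flags.append((ev.record_id, "recorded long after the event"))
        previous = latest_by_user.get(ev.user)
        if previous is not None and ev.event_time < previous:
            flags.append((ev.record_id, "out-of-sequence entry"))
        if previous is None or ev.event_time > previous:
            latest_by_user[ev.user] = ev.event_time
    return flags


day = datetime(2026, 3, 2)
example_events = [
    AuditEvent("A1", "alice", "login", day.replace(hour=9), day.replace(hour=9)),
    AuditEvent("A2", "alice", "result_entry",
               day.replace(hour=9, minute=30), day.replace(hour=9, minute=30)),
    AuditEvent("A3", "alice", "result_entry",
               day.replace(hour=9, minute=10), day.replace(hour=9, minute=45)),
    AuditEvent("A4", "bob", "login",
               day.replace(hour=2, minute=15), day.replace(hour=2, minute=15)),
]
flags = flag_events(example_events)
```

In this made-up data, record A4 is flagged for a 02:15 login and record A3 is flagged twice: it was logged 35 minutes after the claimed event time, and that claimed time precedes the same user's earlier entry. Because each flag carries the `record_id`, it could be routed into a deviation workflow rather than shown on a separate dashboard.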

2. Predictive Instrument Maintenance

Instrument downtime is one of the most expensive and disruptive operational events in any laboratory. ML models trained on instrument telemetry data — oven temperatures, pump pressures, detector signal baselines, calibration drift patterns — can identify the early signatures of impending failures with enough lead time to schedule preventive maintenance before a breakdown occurs.

This application works because the data is well-structured, high-frequency, and directly correlated with known failure modes. Unlike many AI applications in lab software that require complex data preparation, instrument telemetry is typically already captured in a structured numerical format. The models are relatively straightforward to train, and the ROI is measurable: a single avoided HPLC failure during a critical QC batch can justify months of implementation effort.
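As a rough illustration of why structured telemetry makes this tractable, the sketch below flags drift in a pump-pressure series by comparing a short rolling mean against a baseline period. It is a simple statistical stand-in for a trained model; the function name `drift_alerts`, the window sizes, the threshold, and the readings are all assumptions made for the example.

```python
from statistics import mean, stdev


def drift_alerts(readings, baseline_size=20, window=5, z_threshold=3.0):
    """Return indices where the rolling mean drifts away from the baseline.

    The first `baseline_size` readings define normal behaviour. Each later
    window of `window` readings is scored by how many baseline standard
    deviations its mean sits from the baseline mean.
    """
    baseline = readings[:baseline_size]
    mu, sigma = mean(baseline), stdev(baseline)
    if sigma == 0:
        raise ValueError("baseline has no variation; cannot compute a z-score")
    alerts = []
    for end in range(baseline_size + window, len(readings) + 1):
        recent = readings[end - window:end]
        z = (mean(recent) - mu) / sigma
        if abs(z) > z_threshold:
            alerts.append(end - 1)  # index of the newest reading in the window
    return alerts


# Made-up pump pressures (bar): 30 stable readings, then a slow upward drift.
stable = [100.0 + (i % 2) for i in range(30)]
drifting = [101.0 + 0.5 * k for k in range(1, 11)]
alerts = drift_alerts(stable + drifting)
```

With these invented readings the first alert fires at index 34, five readings into the drift, while the stable portion alone produces no alerts. A production model would also need to handle seasonality, sensor noise, and multiple correlated channels.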

3. Automated Data Structuring in ELNs

One of the persistent frustrations with traditional ELN adoption is that scientists use free-text fields to record information that should be structured — instrument parameters entered as prose, concentration values embedded in narrative notes, protocol deviations described in unformatted comments. This unstructured data is technically captured but practically unusable for downstream analysis or cross-experiment comparison.

AI-assisted data structuring addresses this directly. Using natural language processing and large language models, modern ELN platforms can parse free-text entries and propose structured representations — extracting concentration values, reagent identities, and procedural steps into queryable fields. Benchling’s AI layer, launched in late 2025, includes agents specifically designed to clean and restructure legacy unstructured experiment data, making previously siloed historical records searchable and analytically useful.

This is genuinely transformative for organizations with years of ELN data that was captured but never properly structured. A biotech with five years of protein expression experiments recorded in free-text ELN entries can, for the first time, run cross-experiment queries to identify which conditions correlate with the highest yields — without manually re-entering historical data.
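The sketch below shows the shape of the output such a structuring step aims for: free text in, queryable fields out. It uses a plain regular expression rather than the NLP or LLM tooling described above, and the pattern, unit list, and `extract_concentrations` helper are illustrative assumptions, not any ELN vendor's implementation.

```python
import re

# Assumed, deliberately small unit vocabulary; "mM" must precede "M".
CONCENTRATION = re.compile(
    r"(?P<value>\d+(?:\.\d+)?)\s*"
    r"(?P<unit>mM|uM|nM|M|mg/mL|ug/mL|%)\s+"
    r"(?P<analyte>[A-Za-z][\w-]*)"
)


def extract_concentrations(note):
    """Propose structured fields for concentration mentions in a free-text note."""
    return [
        {
            "analyte": m.group("analyte"),
            "value": float(m.group("value")),
            "unit": m.group("unit"),
        }
        for m in CONCENTRATION.finditer(note)
    ]


note = (
    "Induced with 0.5 mM IPTG at 18 C overnight; "
    "lysis buffer contained 150 mM NaCl and 10% glycerol."
)
fields = extract_concentrations(note)
```

For this note the helper proposes three entries (IPTG at 0.5 mM, NaCl at 150 mM, glycerol at 10 %) and ignores the temperature. A fixed pattern like this breaks quickly on novel phrasing, which is where language-model approaches earn their keep; in either case the extracted values are proposals that a scientist should confirm.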

4. Conversational Querying of Laboratory Data

Natural language interfaces to laboratory data — the ability to ask ‘which batches failed pH specification in Q3?’ or ‘show me all stability samples due for testing this week’ in plain English — are moving from prototype to production in 2026. Rather than requiring analysts to construct complex database queries or navigate multi-level LIMS menu structures, conversational AI agents translate natural language questions into structured queries against the underlying data model.

The key qualification is that this works reliably only when the underlying data is well-structured and consistently captured — which returns us to the baseline problem identified earlier. A conversational interface on top of inconsistently structured data produces confidently wrong answers, which is worse than no AI at all. For labs that have invested in structured LIMS data models, however, this capability meaningfully reduces the analytical burden on non-technical users and accelerates routine reporting tasks.
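One way to picture the translation step: assume the language model has already turned the question ‘which batches failed pH specification in Q3?’ into a small structured intent, and the system then maps that intent onto a parameterized query. The sketch below covers only that second half. The `results` table, its columns, and the `build_query` helper are hypothetical, and the in-memory SQLite database stands in for a real LIMS data model.

```python
import sqlite3

# Only these columns may be filtered on; anything else is rejected.
ALLOWED_FIELDS = {"test_name", "status", "quarter"}


def build_query(intent):
    """Turn a structured intent (field -> value) into parameterized SQL."""
    if not intent:
        raise ValueError("intent must contain at least one filter")
    unknown = set(intent) - ALLOWED_FIELDS
    if unknown:
        raise ValueError(f"unsupported filter(s): {sorted(unknown)}")
    fields = sorted(intent)
    where = " AND ".join(f"{field} = ?" for field in fields)
    sql = f"SELECT batch_id FROM results WHERE {where} ORDER BY batch_id"
    return sql, [intent[field] for field in fields]


conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE results (batch_id TEXT, test_name TEXT, status TEXT, quarter TEXT)"
)
conn.executemany(
    "INSERT INTO results VALUES (?, ?, ?, ?)",
    [
        ("B001", "pH", "fail", "Q3"),
        ("B002", "pH", "pass", "Q3"),
        ("B003", "pH", "fail", "Q2"),
        ("B004", "assay", "fail", "Q3"),
    ],
)

# Assumed output of the language-understanding step for the example question.
intent = {"test_name": "pH", "status": "fail", "quarter": "Q3"}
sql, params = build_query(intent)
failed_batches = [row[0] for row in conn.execute(sql, params)]
```

Here the query returns only batch B001. Whitelisting fields and binding values as parameters keeps the model from emitting arbitrary SQL, and it also exposes the data-quality dependency: if some rows recorded the quarter as "3" or the status as "FAILED", the same question would silently return an incomplete answer.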

5. Protein Structure Prediction and Molecular Biology Tools

In research and biotech R&D environments, AI-driven molecular biology tools represent the most mature and scientifically validated application of AI in laboratory software. AlphaFold’s protein structure prediction — recognized with the 2024 Nobel Prize in Chemistry — has moved from a research demonstration into standard practice. Modern ELN platforms with molecular biology modules now integrate structure prediction, protein-ligand binding estimation, and sequence analysis directly into experiment documentation workflows.

This is the application area where AI in laboratory software has the clearest, most peer-reviewed evidence base. The 2024 Nobel recognition was specifically for work that ‘solved a 50-year-old problem’ in biology — the reliable prediction of protein 3D structure from amino acid sequence. For drug discovery teams, the practical impact has been a dramatic reduction in the time required to generate hypotheses about target binding, with computational predictions guiding which compounds to actually synthesize and test.

The Self-Driving Laboratory: Real, But Not What You Think

The concept of the ‘self-driving laboratory’ — an autonomous research facility where AI designs experiments, robots execute them, and the system iterates toward an objective without human intervention — has generated enormous excitement. It is also frequently misunderstood.

Self-driving labs (SDLs) are real and operational. A 2025 review published in Royal Society Open Science documented their capabilities across chemistry, materials science, and biology, confirming that ‘today’s most capable SDLs automate nearly the entire scientific method.’ Argonne National Laboratory’s robotic system Polybot screened 90,000 material combinations in weeks — a throughput that would require months of intensive human effort. The closed-loop Design-Make-Test-Analyze (DMTA) cycle, in which AI proposes experiments, robotics execute them, and ML models analyze results to design the next iteration, is working at scale in specialized research settings.

The important qualification is context. Current SDLs excel at optimization problems with well-defined objective functions — finding the synthesis conditions that maximize yield, the material composition that maximizes conductivity, the compound concentration that maximizes cell viability. They are powerful tools for navigating high-dimensional parameter spaces where the experimental structure is known and the measurement is automated.
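A toy version of the closed loop helps show why a well-defined objective matters. The sketch below runs a propose, test, analyze cycle against a simulated experiment. The response curve, bounds, and step sizes are invented, and the simple step-halving local search stands in for the Bayesian or ML-driven planners that real SDLs typically use.

```python
def simulated_yield(temp_c):
    """Stand-in for a robotic experiment; made-up curve peaking at 72 C."""
    return 90.0 - 0.05 * (temp_c - 72.0) ** 2


def closed_loop(run_experiment, start, step=16.0, min_step=0.5, lo=20.0, hi=120.0):
    """Iterate design -> test -> analyze until the search step is small."""
    best_x, best_y = start, run_experiment(start)
    history = [(best_x, best_y)]
    while step >= min_step:
        # Design: propose neighbouring conditions inside the allowed range.
        candidates = [x for x in (best_x - step, best_x + step) if lo <= x <= hi]
        # Make and Test: run each proposed experiment.
        results = [(x, run_experiment(x)) for x in candidates]
        history.extend(results)
        # Analyze: keep an improvement, otherwise refine the search step.
        improved = [r for r in results if r[1] > best_y]
        if improved:
            best_x, best_y = max(improved, key=lambda r: r[1])
        else:
            step /= 2
    return best_x, best_y, history


best_temp, best_yield, history = closed_loop(simulated_yield, start=40.0)
```

Starting from 40 C, the loop converges on 72 C with a simulated yield of 90.0. The loop only works because `simulated_yield` returns a single number to maximize; problems without such an objective, or without automated measurement, do not fit this pattern.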

As Drug Target Review noted in its 2025 year-end assessment, no AI-discovered drug had achieved FDA approval as of December 2025. The technology has proven its value in accelerating preclinical timelines, but the question of whether it can improve clinical success rates remains unanswered. That distinction matters enormously for how laboratories should plan their AI investments today.

The Regulatory Picture: FDA and EMA Are Moving Fast

Regulators are not standing still. The pace of formal AI guidance from the FDA and EMA in 2025 and early 2026 has been notable — and directly relevant to any laboratory operating in a regulated environment.

In January 2025, the FDA published its first draft guidance specifically addressing AI in drug development: Considerations for the Use of Artificial Intelligence to Support Regulatory Decision-Making for Drug and Biological Products. The guidance establishes a risk-based, context-of-use framework — lower-risk AI applications require minimal documentation while higher-risk applications directly impacting patient safety or product quality require full transparency, prospective validation, and lifecycle monitoring. Final guidance is expected in 2026.

In June 2025, the FDA deployed an internal LLM called ‘Elsa’ across the agency for scientific review and inspection planning. The system can summarize adverse events, perform label comparisons, and assist with clinical protocol review within a secure GovCloud environment. While Elsa is an operational tool rather than a regulatory change, it signals the FDA’s commitment to building internal AI competency — and its ability to process well-structured, compliant submissions more efficiently.

Most significantly for the international laboratory community, in January 2026 the EMA and FDA jointly published 10 Guiding Principles for Good AI Practice across the medicines lifecycle — a landmark joint framework that explicitly covers AI use from early research through manufacturing and post-market safety monitoring. These principles will underpin future guidance in both jurisdictions and represent the first major transatlantic regulatory alignment on AI in life sciences.

| Regulatory Document | Relevance for Lab Software |
|---|---|
| FDA Draft Guidance on AI in Drug Development (Jan 2025) | Context-of-use framework, AI model validation requirements, risk-based documentation |
| EMA–FDA Joint Good AI Practice Principles (Jan 2026) | 10 joint principles covering AI across the full medicines lifecycle |
| FDA: Artificial Intelligence in Drug Manufacturing (2023) | Manufacturing AI applications, process control, quality monitoring |
| Royal Society Open Science: Self-Driving Labs Review (July 2025) | Peer-reviewed overview of SDL capabilities, limitations, and policy implications |
| Pistoia Alliance: Lab of the Future Survey (2024) | AI adoption rates, FAIR data barriers, readiness assessment data |

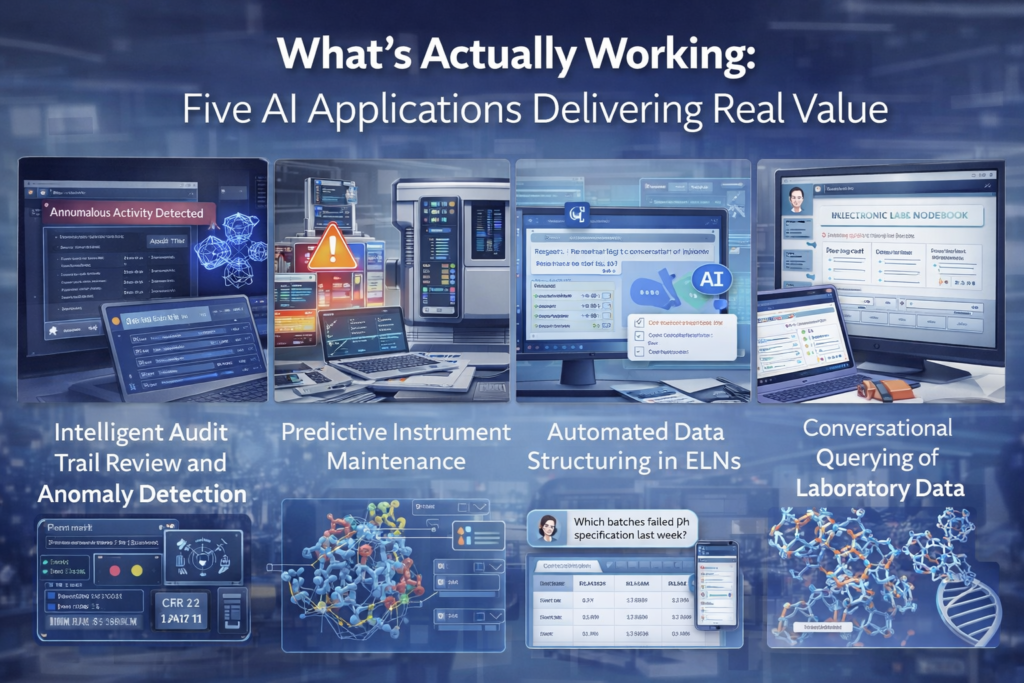

Hype vs Reality: An Honest Assessment

The following table synthesizes what vendors promise, what peer-reviewed evidence supports, and what most laboratories are actually experiencing in 2026.

| AI Capability | Vendor Claims | What’s Actually Working | Reality Check |
|---|---|---|---|
| Audit trail anomaly detection | Real-time compliance monitoring | Reliable pattern flagging on structured audit data | Works well; requires well-configured LIMS with enforced audit trails |
| Instrument predictive maintenance | Zero unplanned downtime | Early warning signals for common failure modes | Proven ROI; best where telemetry data is already captured |
| ELN data structuring (NLP) | Automatic documentation from voice/text | Legacy data cleanup, structured field extraction | Works on well-constrained scientific vocabulary; struggles with novel protocols |
| Conversational data querying | Ask your LIMS anything in plain English | Reliable for common, well-structured queries | Quality of answers is directly proportional to quality of underlying data |
| Protein structure / molecular AI | AI drug discovery | AlphaFold-class structure prediction embedded in R&D platforms | Mature, Nobel-validated; genuinely transformative for biotech R&D |
| Fully autonomous lab (SDL) | Lights-out overnight experimentation | Operational for defined optimization problems in specialized settings | Not applicable to most regulated QC or clinical labs in 2026 |
| AI-generated regulatory reports | One-click compliance documentation | Draft generation for standard report structures | Requires human review and validation; not a replacement for regulatory expertise |

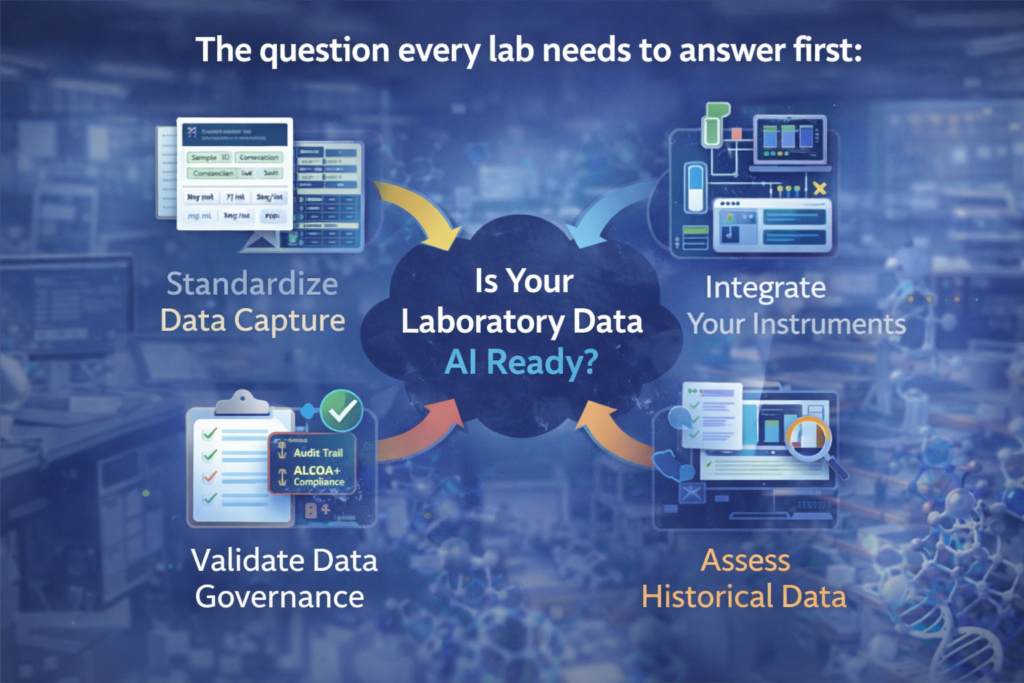

The Question Every Lab Needs to Answer First

Before any conversation about AI features in LIMS or ELN platforms, there is a prerequisite question that determines whether any of those features will deliver value: is your laboratory data structured, consistent, and complete enough to be the fuel for a machine learning model?

The honest answer for most laboratories is: not yet, but it can be. The practical path to AI readiness in a regulated lab runs through four foundational steps, in order.

- Standardize data capture — enforce structured fields over free text, standardize unit representations, define mandatory metadata for every sample type and test method

- Integrate your instruments — direct bidirectional instrument integration eliminates manual transcription and creates the high-frequency, structured data streams that ML models need

- Validate your data governance — ensure your audit trail captures all events, your user accounts are individual and attributable, and your ALCOA+ compliance is verified operationally not just technically

- Assess your historical data — before committing to AI analytics, audit your existing LIMS data for completeness and consistency; AI models trained on inconsistent historical data will produce systematically unreliable predictions
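The first and last steps above lend themselves to a quick self-check. The sketch below scans exported records for missing mandatory metadata and for unit spellings that differ from a canonical form. The field names, the alias table, and the `readiness_report` helper are assumptions for illustration; a real audit would use the lab's own data dictionary.

```python
from collections import Counter

# Assumed mandatory metadata for one sample type.
MANDATORY_FIELDS = ("sample_id", "test_method", "result", "unit", "analyst")

# Assumed canonical spellings, keyed by lower-cased variant.
UNIT_ALIASES = {"mg/ml": "mg/mL", "mg per ml": "mg/mL", "ug/ml": "ug/mL"}


def readiness_report(records):
    """Summarize completeness and unit consistency for a list of record dicts."""
    missing = Counter()
    nonstandard_units = Counter()
    complete = 0
    for rec in records:
        gaps = [f for f in MANDATORY_FIELDS if rec.get(f) in (None, "")]
        missing.update(gaps)
        if not gaps:
            complete += 1
        unit = rec.get("unit")
        if unit:
            canonical = UNIT_ALIASES.get(unit.strip().lower(), unit)
            if canonical != unit:
                nonstandard_units[unit] += 1
    return {
        "complete_fraction": complete / len(records) if records else 0.0,
        "missing_by_field": dict(missing),
        "nonstandard_units": dict(nonstandard_units),
    }


records = [
    {"sample_id": "S1", "test_method": "HPLC-01", "result": 4.9,
     "unit": "mg/mL", "analyst": "jdoe"},
    {"sample_id": "S2", "test_method": "HPLC-01", "result": 5.1,
     "unit": "mg/ml", "analyst": "jdoe"},
    {"sample_id": "S3", "test_method": "", "result": 5.0,
     "unit": "mg per mL", "analyst": None},
    {"sample_id": "S4", "test_method": "HPLC-01", "result": None,
     "unit": "mg/mL", "analyst": "asmith"},
]
report = readiness_report(records)
```

On these four invented records, half are complete, three mandatory values are missing, and two records spell the same unit in non-canonical ways. Numbers like these give a concrete baseline to track while standardizing data capture.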

| The labs that are getting the most out of AI in 2026 are not necessarily the ones with the most sophisticated AI platforms. They are the ones that invested earlier in data quality, workflow standardization, and LIMS configuration discipline. AI amplifies what is already in your data, which means it amplifies both the insights and the gaps. |

What to Look for When Evaluating AI in a LIMS or ELN

When vendors demonstrate AI capabilities, the following questions separate genuine functionality from marketing surface area.

- Can you show me the AI working on data structured like ours — not a curated demo dataset?

- Is the AI layer built on the LIMS data model, or is it an external tool that requires a separate data export?

- How are AI-generated suggestions flagged and tracked in the audit trail? Can a regulator distinguish a human entry from an AI-assisted one?

- What happens to AI outputs when the underlying model is updated — is there a change control process?

- Does the AI documentation meet the FDA’s context-of-use framework from the January 2025 draft guidance?

- Is there a validation package (IQ/OQ/PQ) that covers the AI components, not just the core LIMS functionality?

Frequently Asked Questions

Will AI replace laboratory analysts?

Not in the foreseeable future, and not in regulated environments. AI in laboratory software in 2026 is principally automating pattern recognition, data structuring, and routine reporting tasks — functions that free analysts to focus on interpretation, exception handling, and scientific judgment. The FDA’s 2025 guidance explicitly frames AI as supporting regulatory decision-making, not replacing the human accountable for it. The most accurate framing is that AI is changing what analysts spend their time on, not eliminating the need for them.

Does buying an ‘AI-powered’ LIMS mean my lab is AI-ready?

No. A platform with AI features and a lab with AI capability are different things. The platform provides the tools; readiness requires data infrastructure, governance processes, staff training, and validation effort. A LIMS with excellent AI features and poor underlying data quality will produce poor AI outputs. The correct sequence is: prepare your data foundation, then leverage AI features — not the reverse.

How does AI in LIMS interact with 21 CFR Part 11 compliance?

AI-generated outputs in a regulated laboratory environment must meet the same ALCOA+ and Part 11 requirements as any other electronic record. Specifically: AI suggestions must be Attributable (which AI model version generated this output?), the decision to accept or reject an AI suggestion must be captured in the audit trail, and the AI model itself is subject to Computer Software Assurance (CSA) validation under the FDA’s September 2025 CSA final guidance. The AI layer does not exempt a system from Part 11 — it adds additional validation scope.
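As a sketch of what an attributable AI suggestion might look like as a record, the example below captures the model version alongside the suggestion and stores the human decision as a new immutable entry. The `AISuggestionRecord` fields, the `decide` helper, and the model name are hypothetical; they illustrate the requirement and are not a validated Part 11 design.

```python
from dataclasses import dataclass, replace
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class AISuggestionRecord:
    record_id: str
    field_name: str
    suggested_value: str
    model_name: str            # which model produced the suggestion
    model_version: str         # exact version, for attribution
    generated_at: datetime
    decision: str = "pending"  # "pending", "accepted", or "rejected"
    decided_by: Optional[str] = None
    decided_at: Optional[datetime] = None
    reason: Optional[str] = None


def decide(record, decision, user, reason, now=None):
    """Return a new record with the human decision; the original is unchanged."""
    if record.decision != "pending":
        raise ValueError("a decision has already been recorded")
    if decision not in ("accepted", "rejected"):
        raise ValueError("decision must be 'accepted' or 'rejected'")
    if not user:
        raise ValueError("decision must be attributable to a named user")
    return replace(
        record,
        decision=decision,
        decided_by=user,
        decided_at=now or datetime.now(timezone.utc),
        reason=reason,
    )


suggestion = AISuggestionRecord(
    record_id="ELN-0001",
    field_name="concentration_mM",
    suggested_value="150",
    model_name="example-structuring-model",  # hypothetical model name
    model_version="1.4.2",
    generated_at=datetime(2026, 3, 2, 9, 0, tzinfo=timezone.utc),
)
reviewed = decide(
    suggestion, "accepted", user="jdoe", reason="Matches notebook page",
    now=datetime(2026, 3, 2, 9, 5, tzinfo=timezone.utc),
)
audit_log = [suggestion, reviewed]  # both states are retained
```

Keeping both the pending and the decided record in the log means a reviewer can see what the model proposed, which version proposed it, and who accepted or rejected it and why.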

What is the most practical first AI project for a regulated lab in 2026?

Audit trail anomaly detection or instrument predictive maintenance — whichever corresponds to your lab’s biggest current pain point. Both work on data you are already capturing, have measurable ROI, and do not require extensive new infrastructure. They also produce outputs that are relatively easy to validate and explain to a regulator, which makes them safer first projects than more ambitious applications like autonomous experiment design.

Conclusion: Progress Without Hyperbole

AI in laboratory software is delivering real value in 2026 — in specific applications, for laboratories that have prepared the right data foundation. Audit trail monitoring, predictive maintenance, data structuring, and molecular biology tools are mature enough to justify serious evaluation and implementation planning. The regulatory framework is taking shape rapidly, with landmark FDA and EMA guidance published in 2025 and early 2026 providing the clearest direction the industry has ever had on how AI outputs are expected to be validated and documented.

The honest assessment is that the industry’s progress is significant but uneven. The laboratories seeing the best returns are those that treated AI readiness as a data quality problem first and a technology selection problem second. The most important decision any lab can make about AI in 2026 is not which platform to buy — it is whether its current LIMS data is structured, consistent, and complete enough to be worth analyzing at all.

As Drug Target Review concluded in its 2025 year-end review: the technology has earned its place in the R&D toolkit, while simultaneously demonstrating its current limitations. That balanced perspective — progress without hyperbole — is the right frame for every laboratory evaluating AI investments today.

| This article is part of labsoftwareguide.com’s laboratory technology series. Related reading: What is a LIMS? A Complete Guide | Best ELN Software 2026 | What is ALCOA+? Data Integrity in Laboratory Environments | 21 CFR Part 11: A Practical Guide for Lab Software |

This article is for informational purposes only and does not constitute regulatory, legal, or investment advice. Statistics cited are drawn from publicly available industry surveys and peer-reviewed research. Regulatory guidance is subject to revision — always consult current official sources (FDA.gov, EMA.europa.eu) for compliance decisions.